IBM open sources its Granite code models for code generative tasks, trained on 116 programming languages, with models ranging in size from 3B to 34B parameters (Mike Murphy/IBM Research)

https://research.ibm.com/blog/granite-code-models-open-source

The 2nd Workshop on Recommendation with Generative Models

Wenjie Wang, Yang Zhang, Xinyu Lin, Fuli Feng, Weiwen Liu, Yong Liu, Xiangyu Zhao, Wayne Xin Zhao, Yang Song, Xiangnan He

https://arxiv.org/abs/2403.04399

Amazon launches Bedrock Studio in public preview, a web tool to help orgs experiment with and collaborate on generative AI models and then build AI-powered apps (Kyle Wiggers/TechCrunch)

https://techcrunch.com/2024/05/07/bedr

I have read little or nothing in recent months about how the GPT-NL initiative from TNO, SURF, NFI and others is going. What is its status, and how will they collect enough high-quality training data in line with our rules and standards (Big Tech does not adhere to them)? What is actually being developed, and based on which models?

But perhaps I have missed news reports; if so, let me know 🙂

Leveraging Active Subspaces to Capture Epistemic Model Uncertainty in Deep Generative Models for Molecular Design

A N M Nafiz Abeer, Sanket Jantre, Nathan M Urban, Byung-Jun Yoon

https://arxiv.org/abs/2405.00202

Wiz details two now-fixed security issues on the Hugging Face AI platform that put customer data at risk, as Hugging Face partners with Wiz to improve security (Kevin Poireault/Infosecurity)

https://www.infosecurity-magazine.com/news/wiz-discovers-fla…

Scaling Rectified Flow Transformers for High-Resolution Image Synthesis

Patrick Esser, Sumith Kulal, Andreas Blattmann, Rahim Entezari, Jonas Müller, Harry Saini, Yam Levi, Dominik Lorenz, Axel Sauer, Frederic Boesel, Dustin Podell, Tim Dockhorn, Zion English, Kyle Lacey, Alex Goodwin, Yannik Marek, Robin Rombach

https://…

"Huge generative #AI models like #ChatGPT [are] great at things I never would have expected an #LLM-based program to be good at, like writing certain kinds of computer programs, summarizing and editing text, and a whol…

CALRec: Contrastive Alignment of Generative LLMs For Sequential Recommendation

Yaoyiran Li, Xiang Zhai, Moustafa Alzantot, Keyi Yu, Ivan Vulić, Anna Korhonen, Mohamed Hammad

https://arxiv.org/abs/2405.02429

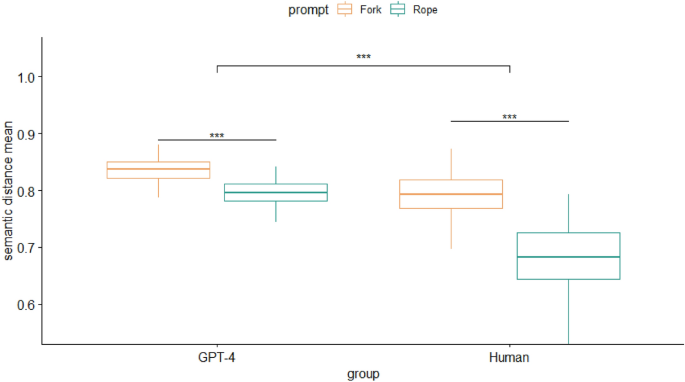

Whodunit: Classifying Code as Human Authored or GPT-4 Generated -- A case study on CodeChef problems

Oseremen Joy Idialu, Noble Saji Mathews, Rungroj Maipradit, Joanne M. Atlee, Mei Nagappan

https://arxiv.org/abs/2403.04013

MMGER: Multi-modal and Multi-granularity Generative Error Correction with LLM for Joint Accent and Speech Recognition

Bingshen Mu, Yangze Li, Qijie Shao, Kun Wei, Xucheng Wan, Naijun Zheng, Huan Zhou, Lei Xie

https://arxiv.org/abs/2405.03152

Solar synthetic imaging: Introducing denoising diffusion probabilistic models on SDO/AIA data

Francesco P. Ramunno, S. Hackstein, V. Kinakh, M. Drozdova, G. Quetant, A. Csillaghy, S. Voloshynovskiy

https://arxiv.org/abs/2404.02552

KC-GenRe: A Knowledge-constrained Generative Re-ranking Method Based on Large Language Models for Knowledge Graph Completion

Yilin Wang, Minghao Hu, Zhen Huang, Dongsheng Li, Dong Yang, Xicheng Lu

https://arxiv.org/abs/2403.17532

Just wow... amazing website/visualization about LAION-5B, a large dataset that many generative AI models are trained on.

#AI

Physics-informed generative neural networks for RF propagation prediction with application to indoor body perception

Federica Fieramosca, Vittorio Rampa, Michele D'Amico, Stefano Savazzi

https://arxiv.org/abs/2405.02131

Fast, Scale-Adaptive, and Uncertainty-Aware Downscaling of Earth System Model Fields with Generative Foundation Models

Philipp Hess, Michael Aich, Baoxiang Pan, Niklas Boers

https://arxiv.org/abs/2403.02774

Report on the AAPM Grand Challenge on deep generative modeling for learning medical image statistics

Rucha Deshpande, Varun A. Kelkar, Dimitrios Gotsis, Prabhat Kc, Rongping Zeng, Kyle J. Myers, Frank J. Brooks, Mark A. Anastasio

https://arxiv.org/abs/2405.01822

PANGeA: Procedural Artificial Narrative using Generative AI for Turn-Based Video Games

Steph Buongiorno, Lawrence Jake Klinkert, Tanishq Chawla, Zixin Zhuang, Corey Clark

https://arxiv.org/abs/2404.19721

Vector Quantization for Recommender Systems: A Review and Outlook

Qijiong Liu, Xiaoyu Dong, Jiaren Xiao, Nuo Chen, Hengchang Hu, Jieming Zhu, Chenxu Zhu, Tetsuya Sakai, Xiao-Ming Wu

https://arxiv.org/abs/2405.03110

Synthesizing study-specific controls using generative models on open access datasets for harmonized multi-study analyses

Shruti P. Gadewar, Alyssa H. Zhu, Iyad Ba Gari, Sunanda Somu, Sophia I. Thomopoulos, Paul M. Thompson, Talia M. Nir, Neda Jahanshad

https://arxiv.org/abs/2403.00093

Chinese city governments are offering "computing vouchers", worth $140K to $280K, to AI startups, to help create a level playing field with China's tech giants (Financial Times)

https://t.co/C0ihUB3D8K

"If it does turn out to be anything like human understanding, it will probably not be based on LLMs.

After all, LLMs learn in the opposite direction from humans. LLMs start out learning language and attempt to abstract concepts. Human babies learn concepts first, and only later acquire the language to describe them."

A Domain Translation Framework with an Adversarial Denoising Diffusion Model to Generate Synthetic Datasets of Echocardiography Images

Cristiana Tiago, Sten Roar Snare, Jurica Sprem, Kristin McLeod

https://arxiv.org/abs/2403.04612

OpenAI expands its Custom Model training program with "assisted fine-tuning", letting organizations set up data training pipelines, evaluation systems, and more (Kyle Wiggers/TechCrunch)

https://techcrunch.com/2024/04/04/openai-expands…

Adobe announces Custom Models, to let businesses customize Firefly models, and Firefly Services, a set of 20 generative and creative APIs, tools, and services (Frederic Lardinois/TechCrunch)

https://techcrunch.com/2024/03/26/adob…

Synthesizing EEG Signals from Event-Related Potential Paradigms with Conditional Diffusion Models

Guido Klein, Pierre Guetschel, Gianluigi Silvestri, Michael Tangermann

https://arxiv.org/abs/2403.18486

Better & Faster Large Language Models via Multi-token Prediction

Fabian Gloeckle, Badr Youbi Idrissi, Baptiste Rozière, David Lopez-Paz, Gabriel Synnaeve

https://arxiv.org/abs/2404.19737 https://arxiv.org/pdf/2404.19737

Abstract: Large language models such as GPT and Llama are trained with a next-token prediction loss. In this work, we suggest that training language models to predict multiple future tokens at once results in higher sample efficiency. More specifically, at each position in the training corpus, we ask the model to predict the following n tokens using n independent output heads, operating on top of a shared model trunk. Considering multi-token prediction as an auxiliary training task, we measure improved downstream capabilities with no overhead in training time for both code and natural language models. The method is increasingly useful for larger model sizes, and keeps its appeal when training for multiple epochs. Gains are especially pronounced on generative benchmarks like coding, where our models consistently outperform strong baselines by several percentage points. Our 13B parameter models solve 12% more problems on HumanEval and 17% more on MBPP than comparable next-token models. Experiments on small algorithmic tasks demonstrate that multi-token prediction is favorable for the development of induction heads and algorithmic reasoning capabilities. As an additional benefit, models trained with 4-token prediction are up to 3 times faster at inference, even with large batch sizes.
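
To make the training objective in the abstract concrete, here is a minimal sketch in plain Python. It is not the authors' implementation. The function names (`multi_token_targets`, `multi_token_loss`), the use of one bias-free linear layer per head, and the treatment of the shared trunk as an already computed hidden vector are all assumptions made for illustration.

```python
import math


def multi_token_targets(tokens, n):
    """For each position t, return the next n tokens (t+1 .. t+n).

    Positions near the end that lack n future tokens are dropped.
    """
    return [tokens[t + 1 : t + 1 + n] for t in range(len(tokens) - n)]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def linear(weights, vec):
    """Apply a (vocab_size x hidden_dim) weight matrix to a hidden vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def multi_token_loss(trunk_hidden, heads, targets):
    """Sum of cross-entropy losses over n independent output heads.

    trunk_hidden: hidden vector from the shared trunk at one position
                  (assumed to be computed elsewhere).
    heads:        list of n weight matrices; head i predicts the token
                  i + 1 steps ahead.
    targets:      the n future token ids for this position.
    """
    loss = 0.0
    for head, target in zip(heads, targets):
        probs = softmax(linear(head, trunk_hidden))
        loss += -math.log(probs[target])
    return loss


# Hypothetical toy setup: vocabulary of 4 tokens, hidden size 3, n = 2 heads.
tokens = [1, 0, 3, 2, 1, 3]
targets = multi_token_targets(tokens, n=2)
hidden = [0.5, -1.0, 2.0]
heads = [[[0.0] * 3 for _ in range(4)] for _ in range(2)]
loss = multi_token_loss(hidden, heads, targets[0])
```

With n = 1 this reduces to the usual next-token loss. With all-zero head weights, as in the toy setup, every head predicts a uniform distribution, so the loss equals n times the log of the vocabulary size.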

Generating, Reconstructing, and Representing Discrete and Continuous Data: Generalized Diffusion with Learnable Encoding-Decoding

Guangyi Liu, Yu Wang, Zeyu Feng, Qiyu Wu, Liping Tang, Yuan Gao, Zhen Li, Shuguang Cui, Julian McAuley, Eric P. Xing, Zichao Yang, Zhiting Hu

https://arxiv.org/abs/2402.19009

The vast applications of deep generative models are anchored in three core capabilities -- generating new instances, reconstructing inputs, and learning compact representations -- across various data types, such as discrete text/protein sequences and continuous images. Existing model families, like Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), autoregressive models, and diffusion models, generally excel in specific capabilities and data types but fall short in others.…

AI21 Labs launches Jamba, an AI model that integrates two architectures: transformer and Mamba, which is based on the Structured State Space model (Kyle Wiggers/TechCrunch)

https://techcrunch.com/2024/03/28/ai21-labs-new-text-g…

Analysis: Apple hired at least 36 AI experts from Google and has created a secretive European laboratory in Zurich, to develop new AI models and products (Michael Acton/Financial Times)

https://t.co/Iq7BirU6xu

Equivalence: An analysis of artists' roles with Image Generative AI from Conceptual Art perspective through an interactive installation design practice

Yixuan Li, Dan C. Baciu, Marcos Novak, George Legrady

https://arxiv.org/abs/2404.18385